This talk was presented virtually at eRum 2020 by Appsilon engineer Krystian Igras. Here is a direct link to the video.

Why Should We Explain Black Box ML Models?

The vast majority of state-of-the-art ML algorithms are black boxes, meaning it is difficult to understand their inner workings. The more these algorithms are used as decision support systems in everyday life, the greater the need to understand the decision rules behind them. This matters for many reasons, including regulatory requirements and the need to verify that the model has learned sensible features. For instance, an ML algorithm might discriminate against a minority group for an arbitrary reason, and this sort of problem is difficult to catch if your model is a black box. I have created an R package (xspliner) that helps create explainable surrogate models to better understand black box ML algorithms.

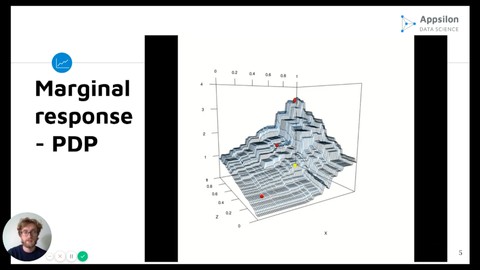

Marginal response and PDP curves

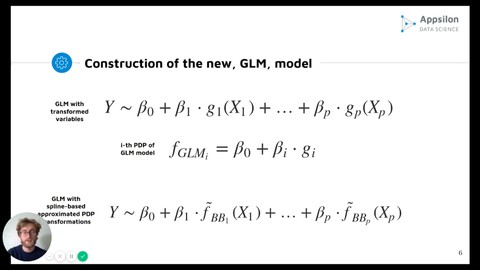

One of the most promising methods for explaining black box ML models is to build an explainable surrogate model. This can be achieved by inferring Partial Dependence Plot (PDP) curves from the black box model and building Generalized Linear Models (GLMs) based on these curves. The advantage of this approach is that it is model agnostic: it needs only the black box's predictions, so you can use it regardless of the method you used to create your model.
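To make the idea of a PDP curve concrete, here is a minimal sketch in plain Python. It is not xspliner code: `black_box`, the feature names `income` and `age`, and the toy data are all hypothetical stand-ins. The point it illustrates is the model-agnostic part: the curve is computed using nothing but calls to the model's prediction function.

```python
from statistics import mean

def black_box(row):
    """Hypothetical stand-in for an opaque model: feature dict in, score out."""
    return row["income"] ** 2 + 3.0 * row["age"] + row["income"] * row["age"]

def partial_dependence(predict, data, feature, grid):
    """For each grid value, force `feature` to that value in every row
    and average the resulting predictions."""
    curve = []
    for value in grid:
        preds = [predict({**row, feature: value}) for row in data]
        curve.append(mean(preds))
    return curve

# Toy data, made up for illustration only.
data = [
    {"income": 1.0, "age": 2.0},
    {"income": 2.0, "age": 4.0},
    {"income": 3.0, "age": 6.0},
]
grid = [0.0, 1.0, 2.0]

pdp_income = partial_dependence(black_box, data, "income", grid)
print(pdp_income)  # [12.0, 17.0, 24.0]
```

Because `partial_dependence` only ever calls `predict`, the same function would work unchanged for any model that exposes a prediction function.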

Construction of Generalized Linear Model with spline-based approximated PDP transformations

In this presentation, you will learn what PDP curves and GLMs are and how you can derive them from black box models. I’ll also show you a custom visualization of how PDP curves are constructed. We will then take a look at a credit-scoring use case in which a GBM model serves as the black box and an explainable GLM is built as its surrogate. Finally, the surrogate model is used to create a user-friendly credit scoring tool that also gives the creditor a detailed report explaining the final decision on whether to grant credit. Want to use xspliner? It is available on CRAN!
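The surrogate construction described above can be sketched as follows, under several simplifying assumptions. This is not the xspliner implementation: each feature is transformed with its exact PDP value rather than a spline approximation of the curve, the GLM is the simplest possible one (ordinary least squares, i.e. a Gaussian family with identity link), and `black_box` is a hypothetical additive function chosen so that the surrogate can reproduce it exactly.

```python
from statistics import mean

def black_box(x1, x2):
    # Hypothetical opaque model; additive on purpose so an exact surrogate exists.
    return x1 ** 2 + 3.0 * x2

def pdp(predict, data, index, value):
    """Partial dependence of feature `index` at `value`:
    mean prediction over the data with that feature fixed."""
    rows = [tuple(value if i == index else v for i, v in enumerate(row)) for row in data]
    return mean(predict(*row) for row in rows)

def solve(a, b):
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def fit_ols(design, target):
    """Least squares via the normal equations (X^T X) beta = X^T y."""
    k = len(design[0])
    xtx = [[sum(r[i] * r[j] for r in design) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(design, target)) for i in range(k)]
    return solve(xtx, xty)

# Toy data, made up for illustration only.
data = [(0.0, 1.0), (1.0, 0.0), (2.0, 3.0), (3.0, 1.0), (4.0, 2.0)]
target = [black_box(*row) for row in data]

# Design matrix: intercept plus one PDP-transformed column per feature.
design = [[1.0, pdp(black_box, data, 0, x1), pdp(black_box, data, 1, x2)] for x1, x2 in data]
intercept, b1, b2 = fit_ols(design, target)

fitted = [intercept + b1 * r[1] + b2 * r[2] for r in design]
max_error = max(abs(f - y) for f, y in zip(fitted, target))
print(intercept, b1, b2, max_error)
```

For this additive toy model both slope coefficients come out at approximately 1 and the surrogate matches the black box up to rounding error. A real black box with feature interactions would be matched only approximately, and a credit-scoring task would call for a binomial family rather than least squares.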

Learn More

- Want to learn how to create a computer vision model within an R environment? Watch Jędrzej Świeżewski’s eRum/useR presentation on fast.ai in R.

- Want to learn how to write high-quality, production-ready R code? See Marcin Dubel’s eRum/useR presentation on Production-Ready R Code here.

- Video Tutorial: How to Create and Customize a Simple Shiny Dashboard

- Find more Appsilon Data Science tutorials here.

Does your company need help with enterprise data analytics, machine learning, or Shiny dashboards? Reach out to us at hello@wordpress.appsilon.com.