AI for assisting in natural disaster relief efforts: the xView2 competition

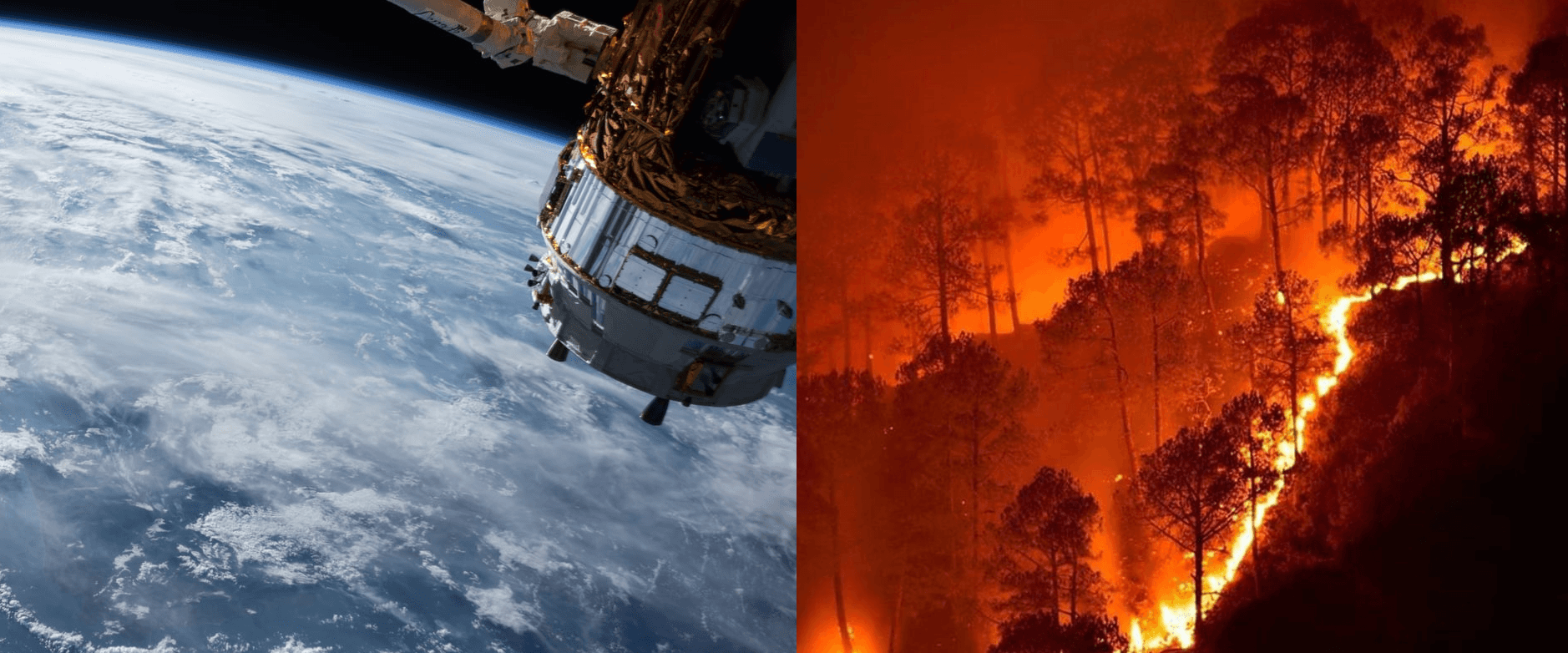

Satellite imagery combined with AI is a game-changing tool, not only in many business scenarios but also in humanitarian efforts. The xView2 competition makes this a reality. It challenges participants to develop a computer vision model that supports Humanitarian Assistance and Disaster Recovery organizations. In this post, I would like to give you an overview of the competition, explain what makes it special, and share why we decided to take part in it.

Overview of the problems and rationale for the competition

Climate change is exacerbating the risk of natural disasters through frequent, unpredictable changes in weather patterns. After a disaster hits, logistics, resource planning, and damage estimation are difficult and can be very expensive. We need good data to maximize the effectiveness of such efforts, especially in resource-constrained developing countries. Currently, the process is labor-intensive: specialists analyze aerial and satellite data from state, federal, and commercial sources to assess the damage. Moreover, the data itself is often scattered, missing, or incomplete.

The xView2 competition addresses both of these issues: poor data and labor-intensive assessment. To tackle the first, it builds on a novel satellite imagery dataset, xBD. xBD provides data on 8 different disaster types spanning 15 countries and covers an area of 45,000 square kilometers. Moreover, it introduces the Joint Damage Scale, which provides guidance and an assessment scale for labeling building damage in a satellite image.

The xView2 dataset sources: the basic dataset includes images from a volcanic eruption in Guatemala, Hurricane Harvey, the tsunami in Palu, and an earthquake in Mexico, among others.

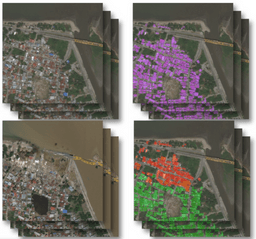

Pre- and post-disaster satellite images

Whilst the xBD dataset provides complete data on disasters, we still need to reduce the amount of human labor involved in assessing the damage. This is an excellent opportunity to employ AI, and in particular deep learning techniques. The sheer amount of data generated after a disaster makes it virtually impossible, even for a team of experts, to estimate the costs and plan relief efforts effectively. A good algorithm, however, can excel in this environment and provide the assessment not only faster but also more accurately than humans.

We have experience handling satellite imagery and we have seen its benefits in both the public and commercial sectors. We are excited to be working on yet another application of such data, as it has several benefits:

- It is frequent and timely (new data is available every day)

- Image resolution is high (30 x 30 cm of surface per pixel)

- Images go beyond visible light into other parts of the electromagnetic spectrum, which opens new opportunities for analysis unavailable to the human eye

- Historical data is easily available for comparisons across time

The 2019 edition of the xView challenge builds on last year’s competition, in which thousands of submissions were benchmarked to choose the best models for predicting bounding boxes for a wide variety of object types. This year the focus is on buildings, and neural networks (one of the tools of deep learning) are a natural choice for this purpose. Teams from around the world are given images showing inhabited areas before and after a disaster, as well as training data with labeled buildings and their level of damage on a 0-3 scale (no damage to destroyed) developed by experts. The training data is used to build a model, which can then assess buildings in new images automatically. Participants are therefore expected to provide two predictions: one for the location of the buildings in an image and another for the damage level of each building found. One possible approach, and the one we chose, is to develop a separate ML model for each of these parts.
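Splitting the task into two models implies a final step that merges their outputs. The snippet below is a minimal sketch of that step only, not the models themselves. The function name `combine_predictions`, the nested-list image representation, and the encoding (0 for "no building", positive integers for damage levels) are assumptions made for illustration.

```python
def combine_predictions(building_mask, damage_levels):
    """Merge a building mask and a damage map into one per-pixel result.

    building_mask: 2D list of 0/1 values (hypothetical localization output).
    damage_levels: 2D list of positive integers (hypothetical damage output).
    Returns a 2D list in which 0 means "no building" and any other value is
    the damage level predicted for a building pixel.
    """
    if len(building_mask) != len(damage_levels):
        raise ValueError("mask and damage map must have the same height")

    combined = []
    for mask_row, damage_row in zip(building_mask, damage_levels):
        if len(mask_row) != len(damage_row):
            raise ValueError("mask and damage map must have the same width")
        # Keep the damage level only where the first model found a building.
        combined.append([d if m else 0 for m, d in zip(mask_row, damage_row)])
    return combined


# Toy 2x2 example with made-up values.
example_mask = [[0, 1],
                [1, 0]]
example_damage = [[3, 2],
                  [4, 1]]
example_combined = combine_predictions(example_mask, example_damage)
print(example_combined)
```

In a real pipeline the two inputs would typically be arrays produced by neural networks, but the masking logic stays the same: damage predictions outside detected buildings are discarded.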

Pre- and post-disaster images, with the location of buildings (purple) and sustained damage (color-coded). This is one of our models in action.

Overcoming challenges

Whilst the xView2 competition is likely to close the two major gaps in disaster relief planning and response – lack of suitable data for training machine learning models as well as labor intensiveness of the assessment process – we discovered a number of key challenges when working on the dataset:

- The pre- and post-disaster images of the same buildings are taken at different angles, which makes the task more difficult.

- Some of the buildings are located in densely inhabited areas, whilst others are free-standing houses. They also differ in size ranging from simple huts to large shopping centers.

- The data spans different types of disasters. This is an advantage for disaster relief purposes and the ultimate goal of the competition but constitutes an additional challenge for participants.

- The data is unbalanced – the dataset mostly contains undamaged buildings.

- The resolution of the images was artificially reduced by the competition organizers, even though higher-quality data is readily available for some of the covered regions.

- The dataset does not contain non-visible light channels, which could help achieve better accuracy (e.g. a near-infrared band would make it possible to compute NDVI, which helps distinguish water from land and vegetation).

- We need to balance the depth and complexity of the network with training efficiency and cost. It is not easy to train a neural network on a vast amount of data in a cost-effective manner.
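One common way to soften the class imbalance mentioned above is to weight each damage class inversely to its frequency during training. The sketch below illustrates the idea under stated assumptions: the function name `inverse_frequency_weights` is hypothetical, the label counts are made up for the example, and this is not necessarily the technique used in our models.

```python
from collections import Counter


def inverse_frequency_weights(labels):
    """Return one weight per class, proportional to 1 / class frequency.

    Uses weight = n_samples / (n_classes * class_count), so rare classes
    get larger weights and the average weight over all samples is 1.
    """
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}


# Made-up label distribution dominated by undamaged buildings.
labels = (["no-damage"] * 80 + ["minor"] * 10
          + ["major"] * 6 + ["destroyed"] * 4)
weights = inverse_frequency_weights(labels)
for cls, weight in weights.items():
    print(f"{cls}: {weight:.4f}")
```

Such weights can be passed to a weighted loss function so that mistakes on rare classes, such as destroyed buildings, count for more than mistakes on the dominant undamaged class.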

Our goal and perspective on the competition

Since launching our Data for Good initiative, we have explored various avenues of engaging with the different actors at the forefront of the fight for our planet. We completed a project with the International Institute for Applied Systems Analysis (IIASA), welcomed government officials from Malaysia, engaged with the biodiversity community, and raised awareness about the movement through various channels, including Medium. Aside from the duty to develop AI solutions for the needs of humanity, we entered the contest because we are keen on computer vision and satellite imagery.

Some of the participants of the previous edition of the competition have a head start, but we’re hoping to get a good result and we are proud to be a part of this community. We believe that the AI for Good movement is on the brink of becoming mainstream and that xView2 is helping to make this a reality. We will share more technical details about the models we are currently developing in January 2020, upon the conclusion of the contest.

Thanks for reading. Follow me on Twitter @marekrog. Questions about the models? Ask in the comments section below.